The Hardware Renaissance

Hardware is back in fashion. It matters more than ever to get this wave right.

Dear friends,

For the longest time now I have been itching to write my impressions and thoughts on the upcoming technological potential of hardware. I am, of course, not myself immune to the brilliant digital products and quality-of-life betterments software companies have produced over the past two decades, nor will I argue here that software is about to be rendered useless, or otherwise consigned to the past tense. It is a human phenomenon, however, to side with the underdog, or, allegorically, to help David pull his slingshot back just a tiny bit harder against Goliath. In the context of venture capital and the private markets, hardware has been a gargantuan underdog, and an onlooker, for at least a few decades.

Reasons for that are ample, and, in all fairness, pretty good ones. “Hardware is hard” is a buzzy statement, a favorite one for many Patagonia-vest-wearing analysts ready to dazzle the poor hardware founder pitching them, but a true one nevertheless. Hardware is bloody hard. It is, after all, a synthesis of mechanical, electrical, materials, computing, and several other engineering disciplines. Any one of those might take decades, and potentially a prestigious degree, to master. Patience is in ever-shorter supply today, hardware is notoriously slow, and on top of that, we have started running into certain hard ceilings of physics itself. The growing disinterest among both investors and builders was, for a long stretch, entirely natural to expect.

We are, however, entering very different times. The most evident force pulling hardware back into the front of the conversation is the AI revolution, which, as it turns out, is constrained by none other than hardware itself. The intelligence we are building needs a body, the body needs power, and the power needs an entire substrate of chips, sensors, motors, batteries, and energy infrastructure that does not assemble itself.

In the words of Sequoia Capital’s Shaun Maguire, “Every software revolution had to be preceded by a hardware one,” and this one will be no different. There is, on top of that, a less universal but no less pressing motivation closer to home. Europe has not fully worked out how it wants to govern itself, or what role it wants to play, and in the meantime an arbitrage of enormous size has opened up for the companies willing to build the bricks of European sovereignty. Hand on heart, we are not, at the moment, sovereign in any meaningful macroeconomic or geopolitical sense, and a sizable portion of that arbitrage will be fulfilled by hardware innovation, particularly in defence, energy, and security.

To put my beliefs further to the test, I had the pleasure of conversing with my friend Ytsen van der Meer, a partner at Golden Egg Check, the most active early-stage VC firm in the Netherlands, and an ex-principal at Cottonwood Technology Fund, one of the world’s leading hardware VC funds. Many thanks, Ytsen!

Short on time? Here is why hardware deserves your attention again:

AI is becoming hardware-bound. Frontier models have outrun the containers we currently give them. They need better chips, sensors, robots, data centers, and power systems that make intelligence usable in the physical world.

Geopolitics has made physical capacity strategic again. In a world of sanctions, export controls, trade blocs, and war, software alone is not enough. Countries and companies that cannot compute, power, manufacture, defend, and repair their own systems are not truly sovereign.

Software moats are eroding. AI is making code easier to produce, software markets more crowded, and many application-layer products easier to copy. Hardware’s bloody difficulty, once its main weakness, is becoming part of its defensibility.

Hardware moats are brutal once they work. The best hardware companies rarely sell just a single product line. They build IP, supplier networks, certifications, manufacturing knowledge, and components that can be reused across entire product families.

The market has not fully repriced this yet. Many investors still treat hardware as the eccentric exception, while paying aggressive prices for software companies in increasingly saturated categories. That mismatch is where the opportunity may be.

“My partner Chris Dixon describes our job as VCs as investing in good ideas that look like bad ideas. If you think about the spectrum of things in which you could invest, there are good ideas that look like good ideas. These are tempting, but likely can’t generate outsize returns because they are simply too obvious and invite too much competition that squeezes out the economic rents. Bad ideas that look like bad ideas are also easily dismissed; as the description implies, they are simply bad and thus likely to be trapdoors through which your investment dollars will vanish. The tempting deals are the bad ideas that look like good ideas, yet they ultimately contain some hidden flaw that reveals their true “badness.” This leaves good VCs to invest in good ideas that look like bad ideas—hidden gems that probably take a slightly delusional or unconventional founder to pursue. For if they were obviously good ideas, they would never produce venture returns.”

— Scott Kupor, “Secrets of Sand Hill Road”

There is a particular form of cognitive dissonance available to anyone watching the artificial intelligence industry from any reasonable vantage point in 2026. The current generation of frontier models can, allegedly, pass the bar exam, draft a passable Wittgensteinian dialogue, diagnose a melanoma from a phone-camera photograph (at roughly the level of a board-certified dermatologist, after which, of course, it will not actually be able to walk you to the clinic), and write 90% of the code that the world’s best software engineers do.

The same models, obviously, cannot pour themselves a glass of water, or, in any literal sense, see the page they’re reading, the room they’re sitting in, or the hand of the person typing into them (I am very eager to uncover how AMI Labs approaches this issue; more on that in the next edition). The most cognitively powerful artifacts ever produced by our species are physically completely helpless, and the discrepancy between what they know and what they can actually do widens by the day.

This is, as Mario Gabriele has memorably put it, a puddle of soupy intelligence being poured into the old shapes. The phone, the laptop, the rectangular tablet, the cloud-hosted chatbot window: every container we currently have for synthetic cognition was designed by engineers solving a very different problem, in some cases ten to fifteen years before the cognition left the research papers. To put it brazenly, intelligence has outrun the body, and the body is, awkwardly for many involved, hardware.

Moreover, the structural disadvantages that used to make hardware a worse business than software, those of capital intensity, protracted iteration, and ephemeral moats, have slowly begun to invert, and the next set of platform-defining companies will be founded on top of physical and design breakthroughs that have not yet happened. Most of the people best positioned to build them are, at the moment, busy building variations on a chatbot, or AI agents that run around on your behalf, trying to capitalize on an extremely saturated market.

It is for some of these reasons that the upcoming decade in technology will, very specifically, be a hardware decade. Obviously not exclusively, and not at the expense of the many interesting and great software businesses we’ll come to see in that same decade. But disproportionately, structurally, and to a degree that almost no one in the modern venture industry (at least among generalist VCs) has yet had the heart to reprice into their portfolios.

“It will not be a zero-sum game. So are there going to be super attractive opportunities in hardware in the next few decades? 100%. Are they also going to be there in the AI application layer? 100% as well. Then the question we should probably be asking ourselves is where do we see the best chance of generating outsized returns? And there I think I personally probably lean a bit more towards the hardware facing end as opposed to the software facing end for multiple reasons…”

— Ytsen van der Meer

“Every Software Revolution is Preceded by a Hardware One”

For pretty much the entirety of human history, “technology” meant something tangible, created or crafted in order to manipulate the physical human environment, something one could pick up or lean on, hold in the hand, and put back down again, get closer to, or get farther away from. Think of a flint axe, a compass, a printing press, a steam engine, a transistor radio, or a telephone. That logic held, staunchly, for about ten thousand years or so. Then, in the span of roughly two to three decades, a peculiar thing happened. A generation of founders, investors, and technologists came to believe that primary value creation had resettled from the physical to the digital. They were not, in any literal way, wrong. Software ate the world, as the famous formulation goes.

That course of history hauled in its profits and its wins and its benefits, and squeezed the opportunity for everything it had, like a lemon on a hot summer afternoon. But somewhere along the way, the people riding it also came to assume that the physical world had been replaced by software outright, as if everything reachable in the physical realm had already been reached, and only software could accelerate us further. Or rather, they left the “hard” investing to others, presumably “specialists”.

To be clear, this was an extraordinarily fruitful and magnificent period of value creation. But it was also, on a longer arc, a historical anomaly. Software merely abstracted the physical world for a time, creating a layer of value that floated on top of it, and depended at every moment on a vast and growing infrastructure that someone, somewhere, had to design, manufacture, and maintain.

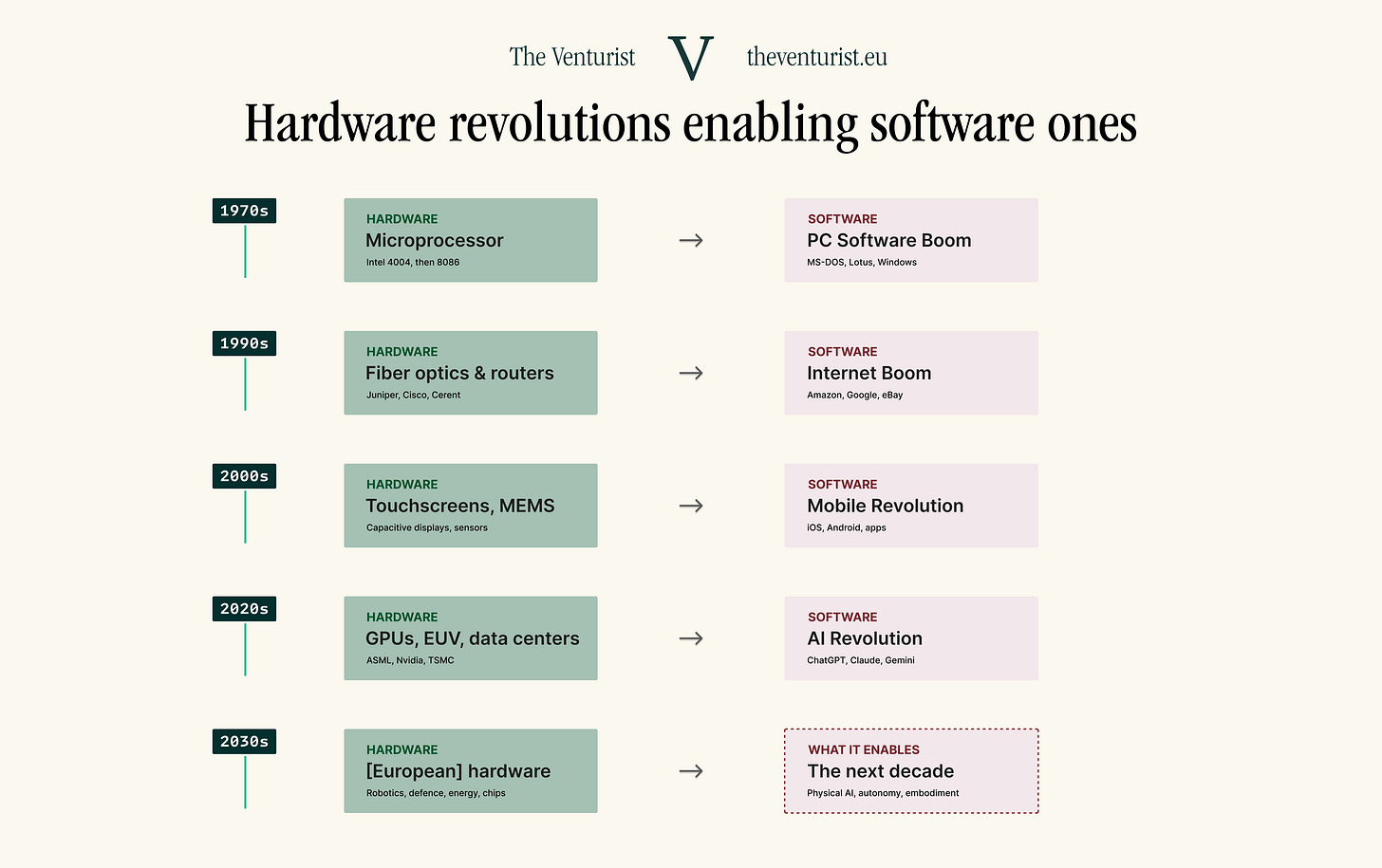

The AI revolution, for all its emphasis on algorithms and architectures, is at its foundation a hardware revolution. It is not possible to train a frontier model without tens of thousands of GPUs manufactured by TSMC on ASML lithography equipment, nor is it possible to run inference workloads at scale without data centres that consume as much electricity as small nations. Nor is it possible to deploy AI in the physical world without sensors, actuators, robots, drones, satellites, and vehicles. The intelligence is in the software, and the power is in the hardware. As mentioned previously, Shaun Maguire put the rule plainly: every software revolution was preceded, enabled, and ultimately defined by a hardware one.

For the App Store, that lustrous triumph of late-2000s software, to exist at all, it had to be preceded and made possible by a piece of hardware, namely the iPhone. For the iPhone itself to exist, the long, patient gestation of two decades’ worth of hardware progress had to happen ahead of it, at Qualcomm, at Broadcom, and across the cellular networks of a generation almost forgotten by the time the App Store eventually launched.

Modern AI follows the same script, almost word for word. The capabilities of today’s frontier models rest on the deep-learning breakthroughs of the 2010s, which themselves rested on a GPU industry that, almost incidentally, had spent two decades making parallel computation cheap enough to experiment with at scale. Cloud computing, before any of it, rode the long, almost invisible march of memory chips and CPUs into commoditisation.

The PC software boom of the 1980s rode the microprocessor. The internet boom of the 1990s rode fibre optic cables, routers, and server racks. The mobile revolution of the 2010s rode capacitive touchscreens, MEMS accelerometers, lithium-ion batteries, and cellular baseband chips. Each of these waves of software value was built on dozens of hardware miracles that happened first.

The pattern, when laid out as a series rather than as isolated observations, becomes pretty meaningful. For roughly fifty years now, the centre of gravity in technology has alternated, with reasonable regularity, between “bits” and “atoms”. The empirical proof, conveniently for our argument, can be found inside the portfolio of a single firm.

For the first twenty-five years of its existence, Sequoia Capital, which, just saying, has in effect written the unspoken handbook for the modern venture industry, made nearly all of its money in hardware. Apple, Cisco, 3Com, Nvidia, Atari, the list goes on. For its second twenty-five years, the same firm made nearly all of its money on software. Google, WhatsApp, Stripe, Airbnb, ServiceNow, the list, again, goes on. The shift between those two periods took roughly the duration of a single human career.

The entire modern venture apparatus, with its preferred metrics, its acceptable loss curves, and its consensus model of what a good outcome looks like, was constructed almost entirely around the second half of that history. There is now a complete generation of capital allocators who, for their entire working lives, have treated software as the default asset class, and hardware as the eccentric exception. The case is particularly pronounced among European venture firms, which are younger than their American counterparts and belong, almost without exception, to that second half of investing history.

Pendulum Swings Back

The pendulum is swinging back, and if I counted right, it is moving towards hardware. Several forces are driving the swing.

First, several previous generations of software are maturing, all at the same time. The mobile cycle, which began with the iPhone and produced the App Store, WhatsApp, Uber, Instagram, Bolt, Spotify, and TikTok, has stopped throwing off new platforms. The cloud cycle, which began with AWS and consolidated around a meager handful of hyperscalers, has stopped throwing off new categories.

SaaS revenue multiples on the public markets have crashed precipitously, from a 2021 median of 18x to 3.4x in early 2026, with a clean bifurcation between AI-native platforms with deep workflow ownership trading at 10x or more, and undifferentiated horizontal SaaS hovering at 2-4x. The market is no longer willing to pay for software-ness as a category. It pays either for incumbency or for AI-native defensibility, and the fraction of new entrants able to plausibly claim either is vanishingly small.
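To get a feel for the size of that compression, here is a rough sketch that takes the 18x and 3.4x medians quoted above as given and applies them to a purely hypothetical company with $100M in annual revenue. The revenue figure and the function names are illustrative assumptions, not data from any real firm.

```python
def multiple_compression(old_multiple: float, new_multiple: float) -> float:
    """Fractional decline from the old revenue multiple to the new one."""
    return (old_multiple - new_multiple) / old_multiple


def implied_valuation(revenue_musd: float, multiple: float) -> float:
    """Valuation in $M implied by a revenue figure and a revenue multiple."""
    return revenue_musd * multiple


MEDIAN_2021 = 18.0              # median multiple quoted in the text
MEDIAN_2026 = 3.4               # median multiple quoted in the text
HYPOTHETICAL_REVENUE_M = 100.0  # assumption: illustrative company, $100M revenue

decline = multiple_compression(MEDIAN_2021, MEDIAN_2026)
value_then = implied_valuation(HYPOTHETICAL_REVENUE_M, MEDIAN_2021)
value_now = implied_valuation(HYPOTHETICAL_REVENUE_M, MEDIAN_2026)

print(f"Multiple compression: {decline:.1%}")
print(f"Implied valuation at 18x:  ${value_then:,.0f}M")
print(f"Implied valuation at 3.4x: ${value_now:,.0f}M")
```

On those assumptions, the same revenue that supported a valuation of about $1.8B in 2021 supports roughly $340M today, a decline of around 81%.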

Second, the AI revolution itself is now hardware-bound, and would remain so even if it succeeded tomorrow. The hypothetical here is worth running through in plain English. Suppose, tomorrow morning, Anthropic, OpenAI, Mistral AI, or Google DeepMind announces, with credible benchmarks, that it has produced a model capable of performing, robustly and at human cost, every cognitive task a knowledge worker in any industry could perform in a day.

AGI is achieved. The press conferences are held, markets convulse, and within an hour, every Fortune 500 company is tugging at the lab’s sleeve, asking how to use it. The first thing the world would discover, within roughly forty-eight hours, is that there is nowhere near enough compute on the planet to actually run it for any meaningful number of users. AGI, in any plausible operational definition, has to be served from gigawatt-scale data centers, and:

The world currently contains roughly a dozen of those, generously counted.

Each one takes about 18 to 36 months to build, permit, and connect to the power grid.

The power grids in question, in most Western countries, are already running at or near capacity.

Bringing AI down from gigawatt to megawatt scale is the kind of efficiency gain that requires a 100x or a 1000x improvement in compute density.

That 100x to 1000x improvement has to come from somewhere: silicon photonics (a very intriguing field), optimised chips (not bullish on this one), better interconnects, more efficient memory, more compact power electronics, or something else entirely. Wherever it comes from, it will certainly be hardware-related.
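The arithmetic behind that range can be sketched in a few lines. This is a back-of-the-envelope illustration only: it assumes, simplistically, that the power needed for a fixed workload falls in direct proportion to the efficiency gain, and the function names are my own.

```python
import math

GIGAWATT = 1e9  # watts
MEGAWATT = 1e6  # watts


def required_gain(current_watts: float, target_watts: float) -> float:
    """Efficiency gain needed to run the same workload at the target power."""
    return current_watts / target_watts


def power_after_gain(current_watts: float, gain: float) -> float:
    """Power draw for the same workload after an efficiency gain.

    Assumption: power scales inversely with the gain, nothing else changes.
    """
    return current_watts / gain


def doublings_needed(gain: float) -> float:
    """How many successive 2x improvements add up to the given gain."""
    return math.log2(gain)


print(required_gain(GIGAWATT, MEGAWATT))           # 1000.0
print(power_after_gain(GIGAWATT, 100) / MEGAWATT)  # 10.0 (megawatts)

for gain in (100, 1000):
    print(f"{gain}x is about {doublings_needed(gain):.1f} successive doublings")
```

Under that assumption, a full move from one gigawatt to one megawatt is exactly a 1000x gain, while a 100x gain leaves the same workload drawing about ten megawatts. Expressed as successive doublings of efficiency, the range is roughly seven to ten of them.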